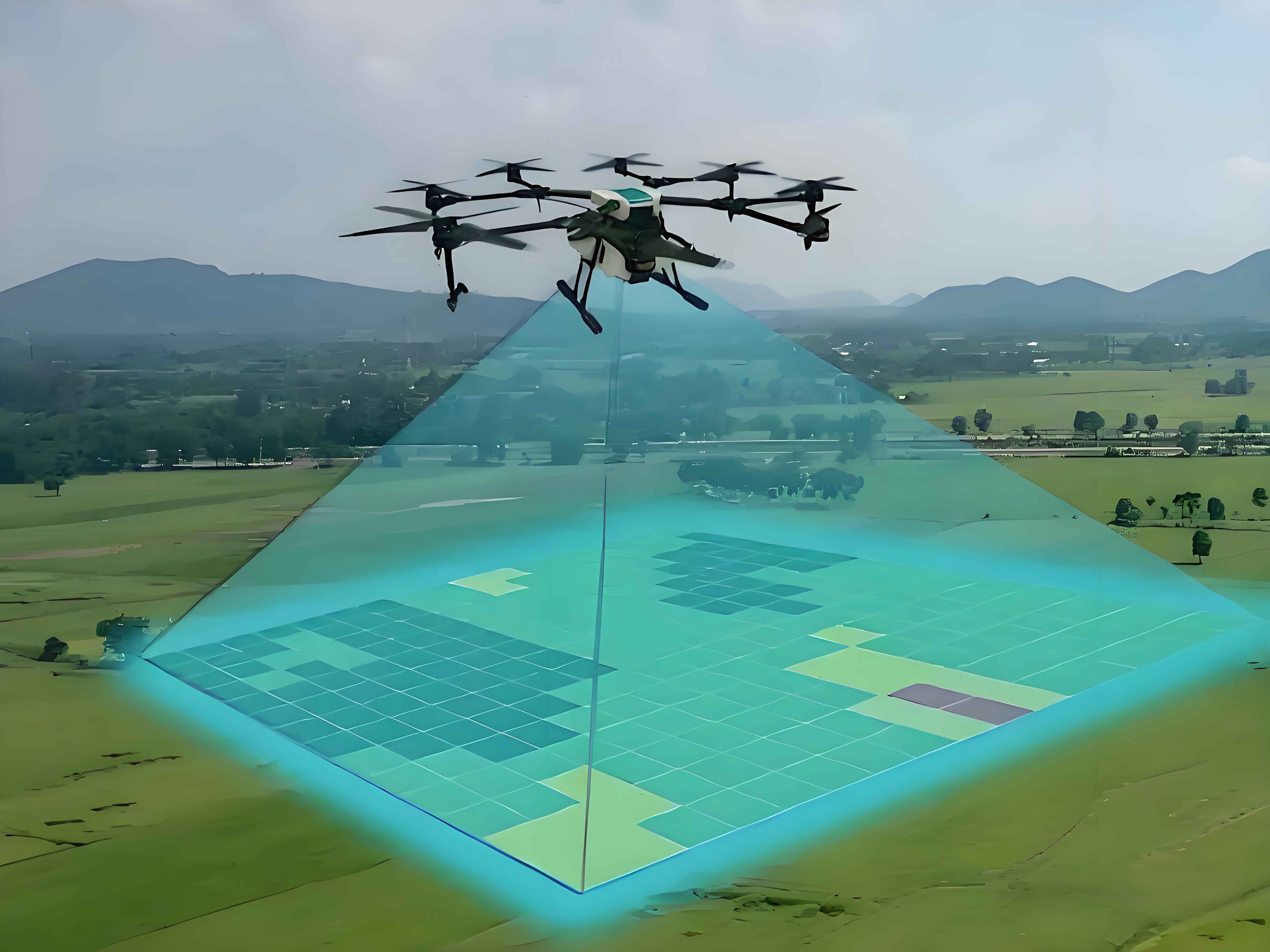

Traditional aerial surveying faces significant limitations in flight altitude and camera perspective, hindering high-resolution, multi-angle image acquisition in complex terrains. These constraints directly compromise the accuracy and efficiency of surveying and modeling workflows. Surveying drones, or surveying UAVs, overcome these barriers through flexible low-altitude operations and multi-sensor integration. This study proposes a Multi-source Attention Edge-constrained U-Net Convolutional Neural Network (MAEU-CNN) model, integrating UAV oblique photography with multi-scale feature extraction and deep learning to enhance geospatial data processing.

Methodology

Image Preprocessing

Surveying UAVs capture oblique imagery that requires noise reduction and enhancement. Key preprocessing steps include:

Grayscale Conversion:

$$Gray = \alpha_1 \times R + \alpha_2 \times G + \alpha_3 \times B$$

where $R$, $G$, $B$ denote the red, green, and blue channel intensities and $\alpha_1$, $\alpha_2$, $\alpha_3$ are the corresponding channel weighting coefficients.
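The weighted sum can be sketched in a few lines of NumPy. The source does not state the values of the weights, so the ITU-R BT.601 luma coefficients (0.299, 0.587, 0.114) are used here purely as an assumption, and the function name `to_grayscale` is illustrative.

```python
import numpy as np

# Assumption: BT.601 luma weights; the source leaves alpha_1..alpha_3 unspecified.
BT601_WEIGHTS = (0.299, 0.587, 0.114)

def to_grayscale(rgb, weights=BT601_WEIGHTS):
    """Return the weighted sum of the R, G, B channels of an (H, W, 3) array."""
    rgb = np.asarray(rgb, dtype=np.float64)
    # Contract the last (channel) axis against the three weights.
    return rgb @ np.asarray(weights, dtype=np.float64)
```

Passing a different `weights` tuple lets the same function reproduce any other choice of $\alpha_1$, $\alpha_2$, $\alpha_3$.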

Binarization:

$$f(x) = \begin{cases} 0, & x < N \\ 1, & x \geq N \end{cases}$$

Pixels with intensity $x$ below the threshold $N$ map to 0 and all others map to 1, separating foreground from background.
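A minimal sketch of this thresholding rule follows. The function name `binarize` and the threshold of 128 in the usage line are illustrative assumptions; the source does not say how $N$ is chosen.

```python
import numpy as np

def binarize(gray, threshold):
    """Map pixels below `threshold` to 0 and pixels at or above it to 1."""
    gray = np.asarray(gray)
    return (gray >= threshold).astype(np.uint8)

# Illustrative threshold only; the source does not specify N.
mask = binarize([[10, 128], [127, 200]], threshold=128)
```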

Histogram Equalization:

$$P(r_k) = \frac{n_k}{N}, \quad s_k = \sum_{i=0}^{k} P(r_i)$$

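where $n_k$ is the number of pixels with gray level $r_k$, $N$ here denotes the total pixel count (not the binarization threshold used earlier), and $s_k$ is the cumulative distribution used to remap level $r_k$. This expands the dynamic range and enhances contrast.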
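The equalization formula can be sketched as follows for 8-bit images. Here `gray.size` plays the role of the total pixel count in the denominator. Scaling the cumulative sum $s_k$ to the range 0 to 255 is a common convention assumed for this sketch, not something the source specifies.

```python
import numpy as np

def equalize_histogram(gray, levels=256):
    """Remap gray levels through the cumulative histogram s_k."""
    gray = np.asarray(gray, dtype=np.uint8)
    # n_k: number of pixels at each gray level r_k.
    hist = np.bincount(gray.ravel(), minlength=levels)
    # P(r_k) = n_k / (total pixel count).
    prob = hist / gray.size
    # s_k = sum of P(r_i) for i = 0..k.
    cdf = np.cumsum(prob)
    # Assumption: scale s_k to [0, levels - 1] to obtain output intensities.
    mapping = np.round(cdf * (levels - 1)).astype(np.uint8)
    return mapping[gray]
```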

Intensity Stretching:

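$$g(x,y) = \frac{f(x,y) - \text{min}}{\text{max} - \text{min}} \times 255$$

where min and max are the smallest and largest pixel values of the input image $f$. This rescales intensities to $[0, 255]$, normalizing pixel values across datasets.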
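The stretching formula translates directly into NumPy, as sketched below. Returning zeros for a constant image (where max equals min and the formula is undefined) is an assumption made for this sketch.

```python
import numpy as np

def stretch_intensity(image):
    """Linearly rescale pixel values to the range [0, 255]."""
    image = np.asarray(image, dtype=np.float64)
    lo, hi = image.min(), image.max()
    if hi == lo:
        # Assumption: a constant image has no contrast to stretch; return zeros.
        return np.zeros_like(image)
    return (image - lo) / (hi - lo) * 255.0
```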

MAEU-CNN Architecture

The model integrates four innovations for surveying UAV data:

- Multi-scale Feature Extraction: Parallel convolutions capture granular/textural patterns

- Edge Constraint Module: Boundary regularization loss:

$$\mathcal{L}_{edge} = \sum \|\nabla Y_{pred} - \nabla Y_{true}\|^2$$

- Convolutional Block Attention (CBAM):

Channel attention: $M_C(F) = \sigma(MLP(\text{AvgPool}(F)) + MLP(\text{MaxPool}(F)))$

Spatial attention: $M_S(F) = \sigma(f^{7\times7}([\text{AvgPool}(F); \text{MaxPool}(F)]))$

- U-Net Fusion: Encoder-decoder structure with skip connections
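The edge-constraint loss above can be illustrated on a pair of 2-D maps. This sketch assumes the gradient $\nabla$ is approximated by finite differences via `np.gradient`; the source does not state which discrete operator is used, and a trainable model would compute this term in an autodiff framework rather than NumPy.

```python
import numpy as np

def edge_loss(y_pred, y_true):
    """Sum of squared differences between the spatial gradients of two maps."""
    y_pred = np.asarray(y_pred, dtype=np.float64)
    y_true = np.asarray(y_true, dtype=np.float64)
    # Assumption: finite-difference gradients along rows (gy) and columns (gx).
    gy_p, gx_p = np.gradient(y_pred)
    gy_t, gx_t = np.gradient(y_true)
    return float(np.sum((gy_p - gy_t) ** 2 + (gx_p - gx_t) ** 2))
```

Because the loss compares gradients rather than raw values, a prediction that differs from the ground truth by a constant offset incurs no penalty, while a prediction with a displaced or missing boundary does.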

| Module | Function | Parameters |
|---|---|---|
| Encoder Blocks | Feature downsampling | 5×5 conv, ReLU |
| Multi-scale Fusion | Feature aggregation | Kernel sizes: 3, 5, 7 |
| CBAM Decoder | Attention-guided upsampling | Transposed conv |
| Edge Constraint | Boundary preservation | Laplacian filter |
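The two CBAM attention formulas can be sketched as a NumPy forward pass. Everything numeric here is a placeholder: the weights `w1`, `w2`, and `kernel` are random stand-ins for learned parameters, and the feature size and reduction ratio are assumptions. Applying channel attention first and spatial attention second follows the original CBAM design; the source does not describe how MAEU-CNN orders them.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def channel_attention(feat, w1, w2):
    """M_C(F) for feat of shape (C, H, W); returns weights of shape (C, 1, 1)."""
    avg = feat.mean(axis=(1, 2))   # global average pooling per channel
    mx = feat.max(axis=(1, 2))     # global max pooling per channel

    def mlp(v):
        # Shared two-layer MLP with a ReLU in between.
        return w2 @ np.maximum(w1 @ v, 0.0)

    return sigmoid(mlp(avg) + mlp(mx))[:, None, None]

def spatial_attention(feat, kernel):
    """M_S(F) for feat of shape (C, H, W); returns weights of shape (1, H, W)."""
    # Channel-wise average and max maps, stacked to shape (2, H, W).
    pooled = np.stack([feat.mean(axis=0), feat.max(axis=0)])
    k = kernel.shape[-1]
    pad = k // 2
    padded = np.pad(pooled, ((0, 0), (pad, pad), (pad, pad)))
    # Windows of shape (2, H, W, k, k) for a same-size k x k cross-correlation.
    windows = np.lib.stride_tricks.sliding_window_view(padded, (k, k), axis=(1, 2))
    out = np.einsum("chwij,cij->hw", windows, kernel)
    return sigmoid(out)[None]

# Hypothetical sizes and random weights, for illustration only.
rng = np.random.default_rng(0)
feat = rng.standard_normal((8, 16, 16))
w1 = rng.standard_normal((2, 8)) * 0.1      # reduction ratio 4 (assumed)
w2 = rng.standard_normal((8, 2)) * 0.1
kernel = rng.standard_normal((2, 7, 7)) * 0.1

refined = feat * channel_attention(feat, w1, w2)
refined = refined * spatial_attention(refined, kernel)
```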

Experimental Analysis

Testing used the WHU Aerial and SpaceNet datasets on an NVIDIA GeForce RTX 2080 Ti GPU. Performance metrics:

| Metric | U-Net | SegNet | MAEU-CNN | Improvement |
|---|---|---|---|---|
| IoU | 0.85 | 0.92 | 0.98 | +15.3% vs U-Net |
| Ambiguity | 0.21 | 0.18 | 0.12 | -42.9% vs U-Net |
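The improvement column is a relative change against the U-Net baseline, which the short sketch below reproduces from the table values. The `iou` helper uses the standard intersection-over-union definition for binary masks; the source does not describe its exact evaluation protocol, so treating two empty masks as a perfect match is an assumption.

```python
import numpy as np

def iou(pred_mask, true_mask):
    """Intersection over union of two binary masks."""
    pred = np.asarray(pred_mask, dtype=bool)
    true = np.asarray(true_mask, dtype=bool)
    union = np.logical_or(pred, true).sum()
    if union == 0:
        # Assumption: two empty masks count as a perfect match.
        return 1.0
    return float(np.logical_and(pred, true).sum() / union)

def relative_change(new, baseline):
    """Percentage change of `new` relative to `baseline`."""
    return (new - baseline) / baseline * 100.0

iou_gain = round(relative_change(0.98, 0.85), 1)        # IoU row
ambiguity_drop = round(relative_change(0.12, 0.21), 1)  # Ambiguity row
```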

| Structure | U-Net | SegNet | MAEU-CNN |
|---|---|---|---|
| Residential | 625 | 517 | 446 (-38.5%) |
| Industrial | 590 | 482 | 412 |
| Medical | 568 | 451 | 376 (-33.8%) |
| Cultural | 612 | 503 | 428 |

The surveying UAV-optimized MAEU-CNN demonstrates superior generalization across terrains. Attention mechanisms reduced feature ambiguity by prioritizing geomorphological elements, while edge constraints preserved critical infrastructure boundaries.

Conclusion

MAEU-CNN significantly advances surveying drone capabilities through:

1. Multi-scale fusion adapting to terrain complexity

2. Attention mechanisms reducing occlusion errors

3. Edge preservation maintaining structural fidelity

4. Computational efficiency enabling real-time processing

Surveying UAV systems integrating this model achieve sub-decimeter accuracy in heterogeneous environments, with 15.3% higher IoU and 42.9% lower ambiguity than the U-Net baseline. Future work will optimize model compression for edge-computing deployments on surveying UAVs.