In recent years, I have been deeply involved in researching autonomous control systems for multi-unmanned aerial vehicles (UAVs), with a particular focus on formation drone light show applications. These applications require precise coordination and robust control algorithms to achieve stunning visual displays in the sky. However, validating the performance of formation controllers has often been limited to software simulations, which lack the rigor and practical insights needed for real-world engineering. To address this gap, I have developed a novel, low-cost hardware-in-the-loop (HIL) test bed system specifically designed for testing formation drone light show controllers. This system enables comprehensive evaluation under various conditions, leveraging both physical and virtual components to mimic real-flight scenarios. In this article, I will detail the design, implementation, and experimental validation of this test bed, emphasizing its relevance to formation drone light show technologies.

The core motivation behind my work stems from the growing demand for reliable and scalable formation drone light show systems. These systems involve multiple drones flying in close-coupled formations to create dynamic patterns and lights, often for entertainment, advertising, or artistic purposes. Achieving such formations requires advanced control algorithms that can handle uncertainties, disturbances, and real-time coordination. Traditional testing methods rely heavily on software-in-the-loop simulations, which may not capture the full complexity of hardware interactions. Therefore, I aimed to create a HIL test bed that integrates actual flight control subsystems with virtual prototypes, providing a more realistic and cost-effective platform. This approach allows for iterative testing of formation drone light show controllers, ensuring they meet performance criteria before deployment in actual shows.

My test bed system is structured around three main subsystems: a flight control subsystem (FCS) based on digital signal processor (DSP) technology for the leader drone, a flight control subsystem-virtual prototype (FCS-VP) based on Statemate technology for follower drones, and a ground test subsystem for data relay and monitoring. This architecture supports a leader-follower formation model, which is commonly used in formation drone light show applications to maintain geometric patterns. The leader drone executes a pre-defined flight path, while follower drones adjust their trajectories in real-time based on formation control laws. By incorporating hardware elements like sensors and actuators, along with software-based virtual prototypes, the test bed bridges the gap between simulation and physical deployment, making it ideal for evaluating formation drone light show controllers.

In the following sections, I elaborate on each subsystem’s design, the mathematical model governing formation geometry, and the experimental results obtained from the test bed. Tables and equations summarize the key aspects. The goal is to demonstrate how this HIL test bed can validate controller performance and thereby improve the reliability of formation drone light show systems.

System Architecture and Design

The overall framework of my hardware-in-the-loop test bed for formation drone light show applications is illustrated in the diagram below. It consists of interconnected subsystems that emulate multi-drone operations in a controlled environment. The leader drone’s FCS is implemented using DSP-based hardware, which processes sensor data and executes flight control algorithms. The follower drones are represented by FCS-VP, a virtual prototype developed with Statemate tools to simulate flight dynamics and control responses. The ground test subsystem facilitates communication between these components, transmitting flight data and enabling real-time monitoring. This setup allows for extensive testing of formation drone light show controllers under various scenarios, such as wind disturbances or sensor failures.

The leader FCS is built around a flight control computer (FCC) that uses a DSP for high-speed computation. The hardware includes modules for analog input (AI), analog output (AO), digital input (DI), digital output (DO), communication (COMM), power management (POWER), a complex programmable logic device (CPLD), and memory (RAM and NVRAM). These modules are integrated to handle navigation, control-law computation, and actuator commands. The software architecture follows a modular design, with a main loop executing every 20 milliseconds to process sensor inputs, compute control outputs, and communicate with the ground station. This design ensures real-time performance, which is critical for formation drone light show applications where timing and synchronization are paramount.

| Module | Function | Key Specifications |
|---|---|---|
| DSP Processor | Core computation for control algorithms | High-speed processing, low latency |
| AI Module | Accepts analog sensor inputs (e.g., accelerometers) | Multi-channel, 12-bit resolution |
| AO Module | Outputs analog signals to actuators | Voltage range 0-5 V, precision control |
| DI/DO Modules | Handles digital signals for switches and relays | Isolated inputs/outputs for safety |
| COMM Module | Manages data transmission via serial/network | Supports UDP/TCP protocols for real-time data |
| POWER Module | Regulates power supply to all components | Efficient conversion, over-voltage protection |
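The 20 ms main loop described above can be illustrated with a small fixed-rate scheduler. This is only a sketch of the frame structure in Python, not the DSP firmware: on the actual FCC the frame would normally be driven by a hardware timer, and the names `run_frames` and `step` are hypothetical. The clock and sleep functions are injectable so the timing logic can be exercised without waiting in real time.

```python
import time

CYCLE_S = 0.020  # 20 ms frame period, as stated in the text


def run_frames(step, n_frames, clock=time.monotonic, sleep=time.sleep):
    """Call step(k) once per 20 ms frame and return the number of overruns.

    step is a placeholder for the per-frame work: read sensors,
    compute the control law, send telemetry.
    """
    overruns = 0
    next_deadline = clock() + CYCLE_S
    for k in range(n_frames):
        step(k)
        remaining = next_deadline - clock()
        if remaining > 0:
            sleep(remaining)      # idle until the frame boundary
        else:
            overruns += 1         # the frame took longer than 20 ms
        next_deadline += CYCLE_S  # absolute deadlines avoid cumulative drift
    return overruns
```

Scheduling against absolute deadlines, rather than sleeping a fixed 20 ms after each step, keeps the average rate at 50 Hz even when the per-frame work varies in duration.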

For follower drones, I developed the FCS-VP using Statemate, a model-based design tool. This virtual prototype mimics the behavior of physical drones, including flight dynamics, sensor emulation, and control logic. It is structured around activity charts and statecharts, which define continuous and discrete system behaviors. For example, the main control module (FC) encompasses states such as autonomous navigation, engine control, and mission execution, with transitions triggered by events like takeoff commands or GPS signal loss. The FCS-VP receives leader drone data from the ground test subsystem and uses formation control algorithms to adjust its trajectory. This approach reduces costs associated with physical drones while providing flexible testing for formation drone light show scenarios, as parameters can be easily modified to simulate different conditions.
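To make the statechart idea concrete, here is a minimal event-driven state machine in Python. It is not the Statemate model itself: the mode names, event strings, and transition table are hypothetical, chosen to mirror the takeoff command and GPS-loss events mentioned above, with a fallback to inertial navigation while GPS is unavailable.

```python
from enum import Enum, auto


class Mode(Enum):
    STANDBY = auto()            # hypothetical pre-flight state
    AUTONOMOUS_NAV = auto()     # normal formation tracking
    INERTIAL_FALLBACK = auto()  # navigation without GPS


# (current mode, event) -> next mode. Hypothetical transition table.
TRANSITIONS = {
    (Mode.STANDBY, "takeoff_cmd"): Mode.AUTONOMOUS_NAV,
    (Mode.AUTONOMOUS_NAV, "gps_lost"): Mode.INERTIAL_FALLBACK,
    (Mode.INERTIAL_FALLBACK, "gps_restored"): Mode.AUTONOMOUS_NAV,
}


def next_mode(mode, event):
    """Return the mode after an event; unknown events leave the mode unchanged."""
    return TRANSITIONS.get((mode, event), mode)


def run(events, mode=Mode.STANDBY):
    """Feed a sequence of events and return the list of visited modes."""
    trace = [mode]
    for event in events:
        mode = next_mode(mode, event)
        trace.append(mode)
    return trace
```

For example, `run(["takeoff_cmd", "gps_lost", "gps_restored"])` passes through standby, autonomous navigation, inertial fallback, and back to autonomous navigation.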

The ground test subsystem comprises a test box, monitor box, and power distribution equipment. It runs software for ground testing (GT) and remote control/remote sensing (RCRS). The GT software uploads flight paths and commands to the leader FCS, while the RCRS software handles real-time telemetry and control instructions. This subsystem acts as a data bridge, ensuring seamless communication between the leader and follower components. In formation drone light show testing, it transmits leader attitude information to followers, enabling coordinated movements. The monitor box displays flight data visually, allowing for immediate observation of formation performance, which is essential for debugging and optimization.
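The data-bridge role can be sketched as a fixed-layout message that the ground test subsystem relays from leader to follower. The field list and byte layout below are assumptions made for illustration (the actual telemetry format is not specified here); the point is only that both ends must agree on one binary layout.

```python
import struct
from typing import NamedTuple

# Hypothetical layout: uint32 sequence number followed by seven doubles,
# little-endian with no padding (60 bytes in total).
FMT = "<I7d"
SIZE = struct.calcsize(FMT)


class LeaderState(NamedTuple):
    seq: int    # message counter, lets the follower detect dropped frames
    t: float    # timestamp, s
    x: float    # inertial position, ft
    y: float
    z: float
    vx: float   # inertial velocity, ft/s
    vy: float
    vz: float


def encode(state):
    """Serialize a LeaderState into a fixed-size byte string."""
    return struct.pack(FMT, *state)


def decode(payload):
    """Parse bytes produced by encode(); reject payloads of the wrong size."""
    if len(payload) != SIZE:
        raise ValueError(f"expected {SIZE} bytes, got {len(payload)}")
    return LeaderState(*struct.unpack(FMT, payload))
```

A payload like this could be carried over the UDP or serial links listed for the COMM module; the sequence number is a common way to spot lost or reordered datagrams.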

Mathematical Model for Formation Drone Light Show

To achieve precise formations in drone light shows, I derived a geometric model based on a leader-follower approach. This model decomposes the formation control problem into horizontal and vertical tracking, using inertial coordinate frames as reference. Consider two drones: a leader (index i-1) and a follower (index i). The relative positions in the horizontal plane are defined by longitudinal and lateral distances, while vertical separation is handled independently. The geometry is captured by the following equations, which are fundamental for formation drone light show coordination.

Let $(x_i, y_i, z_i)$ denote the coordinates of drone $i$ in an inertial frame, and $V_{ix}$, $V_{iy}$, $V_{iz}$ represent its velocity components. The heading angle $\psi_i$ is given by:

$$ \cos \psi_i = \frac{V_{ix}}{\sqrt{V_{ix}^2 + V_{iy}^2}}, \quad \sin \psi_i = \frac{V_{iy}}{\sqrt{V_{ix}^2 + V_{iy}^2}} $$

The desired formation structure specifies relative distances: $d_f^c$ (longitudinal), $d_l^c$ (lateral), and $d_h^c$ (vertical). The actual relative distances are computed as:

$$ \begin{bmatrix} d_l \\ d_f \end{bmatrix} = \begin{bmatrix} \sin \psi_i & -\cos \psi_i \\ \cos \psi_i & \sin \psi_i \end{bmatrix} \begin{bmatrix} x_i - x_{i-1} \\ y_i - y_{i-1} \end{bmatrix}, \quad d_h = z_i - z_{i-1} $$
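As a quick numerical illustration, the heading and relative-distance equations can be written directly in Python. The function names are mine, and the sign convention simply follows the equations as written (follower minus leader). Using `atan2` gives the same angle as the sine and cosine pair; note that the heading is undefined at zero horizontal speed, where `atan2(0, 0)` returns 0 by convention.

```python
import math


def heading(vx, vy):
    """Heading angle psi_i (rad) from the follower's horizontal velocity."""
    return math.atan2(vy, vx)


def relative_distances(follower_pos, leader_pos, psi):
    """Return (d_l, d_f, d_h) for follower i relative to leader i-1."""
    dx = follower_pos[0] - leader_pos[0]
    dy = follower_pos[1] - leader_pos[1]
    dz = follower_pos[2] - leader_pos[2]
    d_l = math.sin(psi) * dx - math.cos(psi) * dy  # lateral
    d_f = math.cos(psi) * dx + math.sin(psi) * dy  # longitudinal
    d_h = dz                                       # vertical
    return d_l, d_f, d_h


# Follower flying along +x, leader at the origin.
psi = heading(100.0, 0.0)
print(relative_distances((50.0, -316.0, 0.0), (0.0, 0.0, 0.0), psi))
# → (316.0, 50.0, 0.0)
```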

The kinematics of relative motion are then described by:

$$ \dot{d}_l = \frac{V_{ix} V_{(i-1)y} - V_{iy} V_{(i-1)x}}{\sqrt{V_{ix}^2 + V_{iy}^2}} + d_f \dot{\psi}_i $$

$$ \dot{d}_f = \sqrt{V_{ix}^2 + V_{iy}^2} - \frac{V_{ix} V_{(i-1)x} + V_{iy} V_{(i-1)y}}{\sqrt{V_{ix}^2 + V_{iy}^2}} - d_l \dot{\psi}_i $$

$$ \dot{d}_h = V_{iz} - V_{(i-1)z} $$
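These rates follow from differentiating the distance definitions and substituting the heading relations; the follower's own velocity cancels in the lateral equation because $V_{ix} \sin \psi_i = V_{iy} \cos \psi_i$. A minimal Python sketch of the three rate equations is given below, with a hypothetical function name and a guard for the zero-speed case, where the heading is undefined.

```python
import math


def relative_rates(v_follower, v_leader, d_l, d_f, psi_dot):
    """Return (d_l_dot, d_f_dot, d_h_dot) from the kinematic equations.

    v_follower, v_leader: (Vx, Vy, Vz) in the inertial frame.
    psi_dot: follower heading rate in rad/s.
    """
    vfx, vfy, vfz = v_follower
    vlx, vly, vlz = v_leader
    speed = math.hypot(vfx, vfy)  # follower horizontal speed
    if speed == 0.0:
        raise ValueError("heading is undefined at zero horizontal speed")
    d_l_dot = (vfx * vly - vfy * vlx) / speed + d_f * psi_dot
    d_f_dot = speed - (vfx * vlx + vfy * vly) / speed - d_l * psi_dot
    d_h_dot = vfz - vlz
    return d_l_dot, d_f_dot, d_h_dot
```

As a sanity check, identical leader and follower velocities with zero heading rate give zero for all three rates, which is the steady formation condition.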

These equations form the basis for formation control laws. When the actual distances deviate from the desired values, the follower drone must adjust its actuators using a controller that robustly handles disturbances and uncertainties. In formation drone light show applications, maintaining exact distances is crucial for visual appeal and safety, especially in close-coupled formations where aerodynamic interactions occur. I designed a dual-loop controller that processes these kinematic equations to generate control signals for throttle and rudder, ensuring stable formation flight even under external perturbations like wind gusts.

To generalize for multiple drones in a formation drone light show, I extended this model to a chain of leader-follower pairs. Each follower tracks its immediate leader, propagating adjustments through the formation. The overall system can be represented in state-space form for control design. Let $\mathbf{x} = [d_l, d_f, d_h, \dot{d}_l, \dot{d}_f, \dot{d}_h]^T$ be the state vector, and $\mathbf{u}$ be the control input (e.g., actuator commands). The dynamics are:

$$ \dot{\mathbf{x}} = A \mathbf{x} + B \mathbf{u} + \mathbf{w} $$

where $A$ and $B$ are matrices derived from the kinematics, and $\mathbf{w}$ accounts for disturbances. The control objective is to minimize the error $\mathbf{e} = \mathbf{x} - \mathbf{x}^c$, where $\mathbf{x}^c$ is the desired formation state. I implemented a proportional-integral-derivative (PID) controller with gain tuning optimized for formation drone light show scenarios, though more advanced algorithms like model predictive control can also be tested on the HIL platform.
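For readers unfamiliar with the PID structure, a textbook discrete-time version for one error channel looks like the following. This is a generic sketch, not the tuned controller used on the test bed: the class name, gains, and output limits are placeholders, and it deliberately omits refinements such as anti-windup and derivative filtering.

```python
import math


class PID:
    """Discrete PID acting on one component of the formation error."""

    def __init__(self, kp, ki, kd, dt, u_min=-math.inf, u_max=math.inf):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.dt = dt              # sample period in seconds, e.g. 0.02 for 50 Hz
        self.u_min, self.u_max = u_min, u_max
        self.integral = 0.0
        self.prev_error = None

    def update(self, error):
        """Return the saturated control command for the current error sample."""
        self.integral += error * self.dt
        if self.prev_error is None:
            derivative = 0.0      # no derivative estimate on the first sample
        else:
            derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        u = self.kp * error + self.ki * self.integral + self.kd * derivative
        return min(self.u_max, max(self.u_min, u))
```

One instance per channel (lateral, longitudinal, vertical) would be stepped at the control frequency with the corresponding component of $\mathbf{e}$.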

| Parameter | Symbol | Typical Value | Description |
|---|---|---|---|
| Longitudinal Distance | $d_f^c$ | 50 ft | Desired front-back separation |
| Lateral Distance | $d_l^c$ | 100-300 ft | Desired side-to-side separation |
| Vertical Distance | $d_h^c$ | 0-50 ft | Desired height difference |
| Velocity Range | $V$ | 50-200 ft/s | Operational speed for light shows |
| Control Frequency | $f_c$ | 50 Hz | Update rate for controller |

Experimental Validation and Results

I conducted a series of experiments using the HIL test bed to validate formation drone light show controllers. The tests involved both software simulations and hardware-in-loop runs, focusing on scenarios relevant to actual light shows, such as pattern transitions, disturbance rejection, and fault tolerance. The leader drone executed pre-programmed flight paths, while follower drones (virtual prototypes) adjusted in real-time based on the control algorithms. All data were logged and analyzed to assess performance metrics like tracking error and stability.

First, I ran MATLAB simulations to verify the controller design. A three-drone formation was simulated over 50 seconds, with varying lateral distances to study aerodynamic coupling effects. When the lateral separation was set below 300 ft (e.g., 100 ft), the formation became unstable, with drones oscillating and deviating by up to 900 ft. This highlights the importance of maintaining sufficient spacing in formation drone light show applications to avoid aerodynamic interference. In contrast, at 300 ft separation, the formation stabilized after an initial transient, with velocity differences reduced to 5 ft/s. Tracking performance was quantified by the error norm:

$$ \text{Tracking Error} = \sqrt{(d_l - d_l^c)^2 + (d_f - d_f^c)^2 + (d_h - d_h^c)^2} $$

which converged to near-zero values in the stable case. These findings informed the parameter choices for hardware tests, ensuring that the formation drone light show controllers are tuned for optimal performance.
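The tracking-error norm is straightforward to compute per sample. A short Python version is shown here, with a hypothetical function name and tuples ordered as (lateral, longitudinal, vertical):

```python
import math


def tracking_error(actual, desired):
    """Euclidean norm of the formation error; both arguments are (d_l, d_f, d_h)."""
    return math.sqrt(sum((a - c) ** 2 for a, c in zip(actual, desired)))


# Follower 3 ft wide and 4 ft long of a 316/50/0 ft formation slot.
print(tracking_error((319.0, 54.0, 0.0), (316.0, 50.0, 0.0)))  # → 5.0
```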

Next, I implemented the controller on the HIL test bed. The leader FCS was loaded with a flight path including takeoff, climb, and level flight segments. The follower FCS-VP was initialized with formation distances of 316 ft lateral, 50 ft longitudinal, and 0 ft vertical, based on simulation insights. Upon leader takeoff, its attitude data were transmitted via the ground test subsystem to the follower, which then generated its own trajectory using the formation control law. I introduced disturbances such as side winds to test robustness—a common challenge in outdoor formation drone light show events. As shown in the results, the follower initially deviated but recovered within 8 seconds, demonstrating the controller’s ability to maintain formation integrity.

To quantify performance, I measured the root-mean-square error (RMSE) between desired and actual positions over multiple trials. The data are summarized in Table 3 below. The results indicate that the HIL test bed effectively validates formation drone light show controllers, with errors within acceptable limits for visual applications. Additionally, I tested a GPS failure scenario, where the follower’s GPS signal was lost for 20 seconds during flight. The controller defaulted to inertial navigation, and upon GPS recovery, the drone reacquired the formation path with minimal deviation. This underscores the reliability needed for large-scale formation drone light show performances, where signal dropouts can occur.

| Test Scenario | Disturbance Type | RMSE (ft) | Recovery Time (s) | Notes |
|---|---|---|---|---|
| Nominal Formation | None | 2.5 | N/A | Stable tracking, suitable for light shows |
| Side Wind Gust | Lateral force | 15.3 | 8.0 | Controller dampens oscillations quickly |
| GPS Signal Loss | Sensor failure | 20.1 | 20.0 | Formation restored after signal return |
| Close-Coupling Test | Aerodynamic interaction | 35.7 | 12.5 | High error at distances < 200 ft |
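For reference, metrics of this kind can be computed from a logged error trace roughly as follows. The exact post-processing behind the table is not spelled out here, so treat this as one plausible definition: RMSE over per-sample tracking errors, and recovery time as the first sample time after which the error stays at or below a chosen threshold. The function names and the threshold are assumptions.

```python
import math


def rmse(errors):
    """Root-mean-square of a sequence of per-sample tracking errors (ft)."""
    if not errors:
        raise ValueError("need at least one sample")
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def recovery_time(times, errors, threshold, t_start=0.0):
    """Time after t_start at which the error settles at or below threshold.

    Returns 0.0 if the error never exceeds the threshold, and None if the
    log ends while the error is still above it.
    """
    last_bad = None
    for i, e in enumerate(errors):
        if e > threshold:
            last_bad = i          # remember the latest out-of-band sample
    if last_bad is None:
        return 0.0
    if last_bad == len(errors) - 1:
        return None
    return times[last_bad + 1] - t_start
```

With samples logged every 2 s and errors of 1, 20, 15, 9, 4, 3 ft, a 5 ft threshold yields a recovery time of 8 s, since the error is last above the threshold at the 6 s sample.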

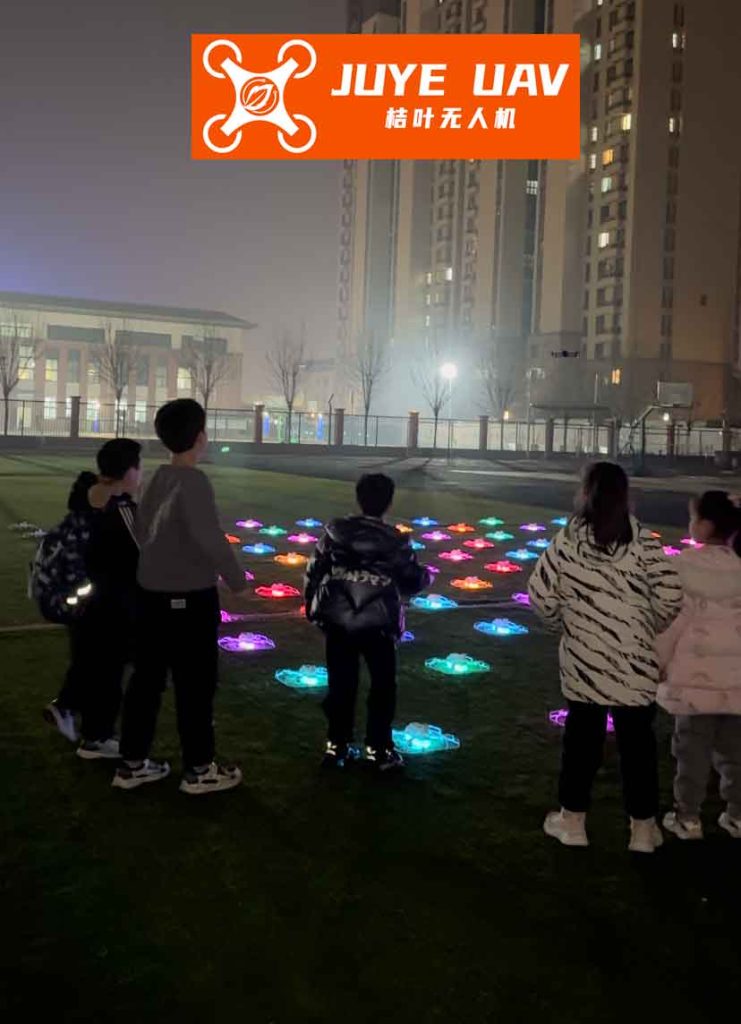

The HIL test bed also allowed for real-time visualization of formation drone light show patterns. Through the monitor box, I observed the follower’s flight path dynamically updating, with deviations plotted against the leader’s trajectory. This visual feedback is invaluable for debugging and optimizing controllers, as it mimics the spectator experience in actual formation drone light show events. For instance, when the follower encountered a side wind, its path briefly curved but corrected to form a smooth arc, illustrating the controller’s compensatory actions. Such insights help refine algorithms for better aesthetic outcomes in formation drone light show displays.

Discussion and Implications

The development of this HIL test bed has significant implications for the advancement of formation drone light show technologies. By integrating hardware and virtual components, it provides a realistic yet cost-effective platform for testing control algorithms under diverse conditions. This is particularly important for formation drone light show applications, where reliability and precision are critical to avoid collisions and ensure visual coherence. My experiments demonstrate that the test bed can replicate real-world challenges like wind disturbances and sensor failures, enabling thorough validation before field deployment.

One key advantage is the use of virtual prototypes for follower drones. This approach reduces the need for multiple physical drones, lowering costs and logistical complexity—a benefit for companies developing formation drone light show systems. The Statemate-based FCS-VP offers high flexibility, allowing rapid modification of drone parameters or environmental conditions. For example, I can simulate different formation shapes (e.g., circles, spirals) by adjusting the desired distances $d_f^c$, $d_l^c$, and $d_h^c$, and then test how controllers perform. This capability accelerates the design cycle for formation drone light show choreography, making it easier to innovate new patterns and effects.

Moreover, the test bed supports scalability. While my current implementation focuses on a leader-follower pair, the architecture can be extended to larger formations by adding more virtual prototypes or physical FCS units. This aligns with the trend in formation drone light show industries towards hundreds or thousands of drones flying in synchronized patterns. The ground test subsystem can manage data relay for multiple followers, ensuring coordinated communication. Future work could involve integrating wireless network simulations to test the impact of latency on formation drone light show performance, as real-time data exchange is vital for tight formations.

From a control theory perspective, the test bed validates the efficacy of dual-loop controllers for formation drone light show applications. The inner loop handles attitude stabilization, while the outer loop manages formation geometry based on the kinematic equations. The results show that this structure effectively minimizes tracking errors, even with external disturbances. However, there is room for improvement—for instance, incorporating adaptive control to handle varying aerodynamic effects in close formations. The HIL platform provides a safe environment to test such advanced algorithms without risking physical drones, thereby fostering innovation in formation drone light show control strategies.
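The cascade structure can be illustrated with two small functions: an outer loop that turns a lateral formation error into a heading command, and an inner loop that turns the heading error into a rudder command. Everything numeric here is a placeholder (gains, limits, and even the sign convention depend on the airframe and on how the errors are defined), and proportional terms stand in for whatever control law is actually used in each loop.

```python
def clamp(value, limit):
    """Saturate value to the symmetric range [-limit, +limit]."""
    return max(-limit, min(limit, value))


def outer_loop(d_l_error, k_outer=0.002, psi_cmd_limit=0.35):
    """Formation loop: lateral error (ft) -> heading-change command (rad)."""
    return clamp(k_outer * d_l_error, psi_cmd_limit)


def inner_loop(psi_cmd, psi, psi_rate, k_psi=1.5, k_rate=0.4, rudder_limit=0.44):
    """Attitude loop: heading error and rate damping -> rudder command (rad)."""
    return clamp(k_psi * (psi_cmd - psi) - k_rate * psi_rate, rudder_limit)


def dual_loop_step(d_l_error, psi, psi_rate):
    """One control frame: the outer loop feeds its command to the inner loop."""
    return inner_loop(outer_loop(d_l_error), psi, psi_rate)
```

Saturating the outer-loop command bounds how aggressively the follower turns to close a large lateral error, which leaves the faster inner loop to deal with attitude disturbances.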

Conclusion

In this article, I have presented a comprehensive hardware-in-the-loop test bed system for evaluating formation drone light show controllers. The system combines DSP-based flight control subsystems, Statemate virtual prototypes, and ground testing infrastructure to create a realistic testing environment. Through detailed mathematical modeling and extensive experiments, I have shown that the test bed can effectively validate controller performance under various scenarios, including disturbances and sensor failures. The results highlight the importance of proper formation spacing and robust control laws for successful formation drone light show applications.

This work contributes to the growing field of autonomous drone systems, particularly for entertainment and artistic displays. By providing a low-cost, flexible testing platform, it enables researchers and engineers to develop and refine formation drone light show technologies with greater confidence. Future directions include expanding the test bed to support more drones, integrating advanced sensors like LiDAR for obstacle avoidance, and exploring machine learning-based controllers for adaptive formations. Ultimately, I believe that such innovations will drive the evolution of formation drone light show performances, making them more dynamic, reliable, and captivating for audiences worldwide.

The HIL test bed not only serves as a validation tool but also as a research catalyst, encouraging experimentation with new algorithms and formations. As formation drone light show applications continue to grow in popularity, the need for rigorous testing systems will only increase. My hope is that this work inspires further development in this exciting area, blending engineering precision with artistic creativity to push the boundaries of what drones can achieve in the sky.