The dream of coordinating multiple unmanned aerial vehicles (UAVs) to act as a single, intelligent entity has long captivated researchers and engineers. While much of the published work remains confined to simulations, my research and practical experiments have focused on bridging this gap, moving algorithms from computer screens into the real, unpredictable sky. This journey, fundamentally about reliable multi-agent control, finds one of its most spectacular expressions in the modern formation drone light show. The principles of leader-follower coordination, robust communication, and precise outer-loop control that my team and I developed for a pair of small UAVs are the very bedrock upon which massive, synchronized formation drone light show fleets are built. This account details our methodological approach, system design, and experimental validation, framing it within the broader context of enabling complex aerial choreography.

The core challenge in multi-UAV coordination lies in defining a scalable and reliable control architecture. For our initial foray into physical experimentation, we adopted the well-established leader-follower pattern. This paradigm designates one UAV as the leader (the lead aircraft), tasked with following a predefined trajectory. The other UAV, the follower (the wingman), is responsible for maintaining a specific geometric relationship relative to the leader. This approach simplifies the high-level control problem by decomposing the fleet’s behavior: the leader defines the collective path, while followers manage relative positioning. This hierarchical decomposition is conceptually identical to managing a formation drone light show, where a virtual leader or central timeline defines the show’s macro-movements, and each drone acts as a follower to its assigned position in the evolving shape.

Geometric Foundation and Control Law Design

The first step was to mathematically define the follower’s task. We considered a three-dimensional space where the leader’s position is known. The control objective for the follower is to maintain a desired horizontal separation, $d_c$, and a desired vertical separation, $h_c$. The geometry of this setup is crucial. Let us define the relative position error in a coordinate frame attached to the follower. The leader’s position relative to the follower can be described by the relative coordinates $(x, y, z)$, where $x$ is the forward distance, $y$ is the lateral distance, and $z$ is the vertical distance. The dynamics of this relative motion are given by:

$$ \dot{x} = V_l \cdot \cos(\psi_e) + \dot{\psi}_l \cdot y - V_f $$

$$ \dot{y} = V_l \cdot \sin(\psi_e) - \dot{\psi}_l \cdot x $$

$$ \dot{z} = V_{lz} - V_{fz} $$

Here, $V_l$ and $V_f$ are the horizontal speeds of the leader and follower, respectively. $\psi_l$ and $\psi_f$ are their headings, with the heading error defined as $\psi_e = \psi_l - \psi_f$. $V_{lz}$ and $V_{fz}$ are their vertical velocities. All these states are provided in real-time by the onboard navigation system.
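The three relative-motion equations can be evaluated directly from the telemetry states. The following is a minimal sketch, not our flight code: the function names `relative_kinematics` and `euler_step` are hypothetical, angles are assumed to be in radians, and a simple forward-Euler step is assumed for propagation.

```python
import math


def relative_kinematics(x, y, v_l, v_f, psi_l, psi_f, psi_l_dot, v_lz, v_fz):
    """Evaluate the relative-motion equations and return (x_dot, y_dot, z_dot).

    Speeds are in m/s, headings in radians, heading rate in rad/s.
    """
    psi_e = psi_l - psi_f  # heading error as defined in the text
    x_dot = v_l * math.cos(psi_e) + psi_l_dot * y - v_f
    y_dot = v_l * math.sin(psi_e) - psi_l_dot * x
    z_dot = v_lz - v_fz
    return x_dot, y_dot, z_dot


def euler_step(state, rates, dt):
    """Advance (x, y, z) by one forward-Euler step of length dt seconds."""
    return tuple(s + r * dt for s, r in zip(state, rates))


if __name__ == "__main__":
    # Illustrative numbers only: leader 2 m/s faster, headings aligned, level flight.
    rates = relative_kinematics(350.0, 0.0, 27.0, 25.0, 0.0, 0.0, 0.0, 0.0, 0.0)
    print(euler_step((350.0, 0.0, 70.0), rates, 0.2))
```

With matched speeds, aligned headings, and no turn rate, all three rates are zero, which is the equilibrium the follower's controller tries to hold.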

Our control strategy was designed using a cascade structure. The inner loops—controlling the drone’s attitude, throttle, and basic stability—were already developed and tested on a single vehicle. Our focus was the outer-loop, or guidance-level, controller for the follower. This controller takes the desired relative position $(d_c, h_c)$ and the current measured states to compute velocity, heading, and altitude commands for the follower’s autopilot. We designed Proportional-Integral (PI) controllers for each channel to ensure steady-state error elimination. The control laws were formulated as follows:

$$ V_{f\_cmd} = K_{xp} \cdot e_x + K_{xi} \int_{0}^{t} e_x \, d\tau $$

$$ \psi_{f\_cmd} = K_{yp} \cdot e_y + K_{yi} \int_{0}^{t} e_y \, d\tau $$

$$ h_{f\_cmd} = K_{zp} \cdot e_z + K_{zi} \int_{0}^{t} e_z \, d\tau $$

The composite error signals $e_x$, $e_y$, and $e_z$ combine both position and rate errors to improve damping and response. For instance, the forward error $e_x$ might combine the distance error with the relative speed error:

$$ e_x = K_d (x - d_c) + K_v (V_l \cos(\psi_e) - V_f) $$

Similar constructions apply to the lateral and vertical channels. The gains $K_{xp}$, $K_{xi}$, $K_{yp}$, and so on were tuned empirically during simulation and initial ground tests. This method of generating direct velocity and heading commands is highly effective for small UAVs and is conceptually scalable. In a formation drone light show, each drone’s flight controller receives similar time-synchronized position or velocity commands, translated from the show’s choreography, to create the overall image or motion.

| Control Channel | Proportional Gain ($K_p$) | Integral Gain ($K_i$) | Primary Error Source |
|---|---|---|---|
| Forward Velocity | 0.8 | 0.05 | Relative distance & speed |
| Lateral Heading | 1.2 | 0.03 | Lateral offset & heading rate |
| Altitude | 1.5 | 0.07 | Vertical separation & climb rate |
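As an illustration of how one channel of the PI law could be discretized, here is a minimal sketch of the forward-velocity channel using the tabulated gains. Several things in it are assumptions rather than details of our implementation: the `PIChannel` class and `forward_error` function are hypothetical names, the blending weights $K_d$ and $K_v$ default to 1.0 because their tuned values are not listed above, the integral uses a rectangular rule, and the anti-windup clamp is an added safeguard.

```python
import math
from dataclasses import dataclass


@dataclass
class PIChannel:
    """Discrete PI law: cmd = kp * e + ki * integral(e)."""
    kp: float
    ki: float
    integral: float = 0.0
    i_limit: float = float("inf")  # assumption: optional anti-windup clamp

    def update(self, error: float, dt: float) -> float:
        # Rectangular integration of the error, clamped to +/- i_limit.
        self.integral += error * dt
        self.integral = max(-self.i_limit, min(self.i_limit, self.integral))
        return self.kp * error + self.ki * self.integral


def forward_error(x, d_c, v_l, v_f, psi_e, k_d=1.0, k_v=1.0):
    """Composite forward error e_x; k_d and k_v are placeholder weights."""
    return k_d * (x - d_c) + k_v * (v_l * math.cos(psi_e) - v_f)


if __name__ == "__main__":
    forward = PIChannel(kp=0.8, ki=0.05)  # gains from the table
    e_x = forward_error(x=360.0, d_c=350.0, v_l=25.0, v_f=25.0, psi_e=0.0)
    print(forward.update(e_x, dt=0.2))  # dt assumes one update per 5 Hz sample
```

The lateral and altitude channels would reuse `PIChannel` with their own gains and composite errors.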

The Nerve Center: A Dual-Port Ground Control System

The pivotal innovation that moved our project from simulation to reality was the development of a custom Ground Control Station (GCS) with dual independent communication links. In a true formation drone light show, drones often communicate via mesh networks or precise time-synchronized RF links. For our two-vehicle proof-of-concept, we implemented a centralized architecture where the GCS acts as the coordinating brain. It continuously receives telemetry from both drones, runs the cooperative control algorithm, and sends updated commands back to each vehicle.
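Before the control laws can run, the GCS has to turn two GPS fixes into the relative coordinates $(x, y, z)$. The sketch below shows one way this could be done. It assumes a flat-earth approximation (reasonable for separations of a few hundred meters), compass headings measured clockwise from north, $x$ forward along the follower's heading, and $y$ positive to the follower's right. The function name `relative_position` and the example coordinates are hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; fine for short baselines


def relative_position(leader, follower, follower_heading_rad):
    """Leader position in the follower's body-aligned horizontal frame.

    leader, follower: (lat_deg, lon_deg, alt_m) tuples.
    Returns (x, y, z): forward, right, and vertical offsets in meters.
    """
    lat_l, lon_l, alt_l = leader
    lat_f, lon_f, alt_f = follower
    # Flat-earth conversion of the lat/lon difference to north/east meters.
    d_north = math.radians(lat_l - lat_f) * EARTH_RADIUS_M
    d_east = (math.radians(lon_l - lon_f) * EARTH_RADIUS_M
              * math.cos(math.radians(lat_f)))
    # Rotate north/east into the follower's forward/right axes.
    c, s = math.cos(follower_heading_rad), math.sin(follower_heading_rad)
    x = d_north * c + d_east * s
    y = -d_north * s + d_east * c
    z = alt_l - alt_f
    return x, y, z


if __name__ == "__main__":
    # Hypothetical fixes: leader about 350 m north of and 70 m above the follower.
    follower = (30.0, 114.0, 150.0)
    leader = (30.0 + math.degrees(350.0 / EARTH_RADIUS_M), 114.0, 220.0)
    print(relative_position(leader, follower, follower_heading_rad=0.0))
```

The sign convention for $y$ is chosen so that a leader heading further clockwise than the follower drifts toward positive $y$, matching the sign of the $V_l \sin(\psi_e)$ term in the lateral equation.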

We engineered the GCS software in C++, utilizing a multi-threaded serial port class to manage two simultaneous data streams. Each UAV transmitted a packed data structure at 5 Hz, containing its GPS position (latitude, longitude, altitude), attitude (roll, pitch, yaw), airspeed, and other sensor readings. The GCS software performed several critical functions:

- Dual-Port Data Acquisition: It listened on two virtual COM ports, keeping the data streams of the two aircraft (UAV-1 and UAV-2) separate and assigning each to its leader or follower role.

- Data Integrity and Synchronization: Every data packet was protected with a 16-bit CRC, and only packets with a valid CRC were processed. To address the fundamental issue of time alignment, we synchronized data based on the GPS UTC timestamp embedded in each packet. The control algorithm was executed using position and attitude data from both drones that shared the same UTC second, ensuring that calculated relative positions and derived commands were based on a consistent temporal snapshot.

- Algorithm Execution and Command Dispatch: The synchronized data was fed into the geometric model and PI control laws described earlier. The resulting velocity, heading, and altitude commands for the follower were then packaged and transmitted over the follower’s dedicated radio link.
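The packet validation step in the list above can be sketched as follows. Our GCS was written in C++, so this Python version is only an illustration. The field layout in `PAYLOAD` is hypothetical, and the CRC variant is an assumption as well: the sketch uses the CCITT polynomial via Python's `binascii.crc_hqx` with an initial value of `0xFFFF`, which may differ from the variant used on the aircraft.

```python
import binascii
import struct

# Hypothetical little-endian layout: UTC second (uint32), latitude and
# longitude (double), then altitude, roll, pitch, yaw, airspeed (float32).
PAYLOAD = struct.Struct("<Idd5f")
CRC = struct.Struct("<H")


def crc16(data: bytes) -> int:
    """16-bit CRC with the CCITT polynomial (assumed variant)."""
    return binascii.crc_hqx(data, 0xFFFF)


def encode_packet(utc_s, lat, lon, alt, roll, pitch, yaw, airspeed) -> bytes:
    """Pack the telemetry fields and append the CRC of the payload."""
    payload = PAYLOAD.pack(utc_s, lat, lon, alt, roll, pitch, yaw, airspeed)
    return payload + CRC.pack(crc16(payload))


def decode_packet(frame: bytes):
    """Return the unpacked fields, or None if the length or CRC is wrong."""
    if len(frame) != PAYLOAD.size + CRC.size:
        return None
    payload, tail = frame[:PAYLOAD.size], frame[PAYLOAD.size:]
    (received_crc,) = CRC.unpack(tail)
    if crc16(payload) != received_crc:
        return None  # corrupted packet: drop it, as described above
    return PAYLOAD.unpack(payload)


if __name__ == "__main__":
    frame = encode_packet(1000, 30.5, 114.25, 220.0, 0.0, 0.0, 1.5, 25.0)
    print(decode_packet(frame))
    corrupted = bytes([frame[0] ^ 0xFF]) + frame[1:]
    print(decode_packet(corrupted))
```

Rejecting a frame outright, rather than attempting repair, keeps the control loop working only with data it can trust, at the cost of occasionally skipping a sample.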

This centralized GCS-based approach, while not fully decentralized, proved the feasibility of the control laws and provided a robust testbed. Scaling to a formation drone light show of hundreds of drones requires moving this intelligence into a distributed or hybrid system, but the core requirement of reliable, synchronized state estimation and command generation remains unchanged.
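The UTC-second pairing that gives the controller a consistent snapshot can be sketched in a few lines. This is an illustrative reconstruction, not our GCS code: the function name `pair_by_utc_second` and the `utc_s` dictionary key are hypothetical, and because each aircraft sends several packets per second, the sketch assumes the most recent packet within a given second is the one to keep.

```python
def pair_by_utc_second(leader_packets, follower_packets):
    """Pair leader and follower packets that share the same UTC second.

    Each packet is a dict with at least a "utc_s" key (integer UTC second).
    Returns a list of (leader_packet, follower_packet) in time order.
    """
    def latest_per_second(packets):
        latest = {}
        for pkt in packets:
            latest[pkt["utc_s"]] = pkt  # later packets overwrite earlier ones
        return latest

    leader = latest_per_second(leader_packets)
    follower = latest_per_second(follower_packets)
    # Only seconds present in both streams yield a usable snapshot.
    common = sorted(leader.keys() & follower.keys())
    return [(leader[s], follower[s]) for s in common]


if __name__ == "__main__":
    leader = [{"utc_s": 10, "seq": 0}, {"utc_s": 10, "seq": 1},
              {"utc_s": 11, "seq": 2}, {"utc_s": 13, "seq": 3}]
    follower = [{"utc_s": 10, "seq": 0}, {"utc_s": 12, "seq": 1},
                {"utc_s": 13, "seq": 2}]
    for pair in pair_by_utc_second(leader, follower):
        print(pair)
```

Seconds for which one stream has no valid packet are simply skipped, so a dropout on either link delays the next command rather than producing one from mismatched data.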

Experimental Validation: From Ground Rolls to Free Flight

Before risking flight, we conducted extensive ground taxi tests. Two complete avionics suites were installed in a ground vehicle. Driving along a ~65 km route over 2684 seconds, we validated the entire hardware and software chain. The dual-port GCS successfully acquired, displayed, and logged data from both systems simultaneously. The consistency between the two navigation solutions, visible in their nearly overlapping trajectory and heading plots, confirmed hardware reliability and software stability, including under simulated GPS dropouts. This phase was critical for debugging the communication protocol and data synchronization logic without the consequences of a mid-air failure.

| Test Phase | Duration (s) | Distance (km) | Primary Objective | Outcome |
|---|---|---|---|---|
| Ground Taxi Test | 2684 | ~65 | Validate comms, GCS stability, hardware | Success; data streams stable and synchronous |
| Flight Test – Phase 1 | ~300 | ~8 | Altitude-separated circular tracking | Success; maintained horizontal formation |
| Flight Test – Phase 2 | ~405 | ~10 | Reduced separation & different radius tracking | Success; demonstrated adjustable formation parameters |

Emboldened by ground success, we proceeded to flight tests. We used two identical, internally developed fixed-wing UAVs (wingspan: 3.5 m, mass: 9 kg). After manual take-off and climb, control was switched to the autonomous mode managed by our GCS. UAV-2 was designated the Leader, flying a pre-programmed circular orbit at 220 m altitude with a 350 m radius. UAV-1 was the Follower, tasked by our real-time algorithm to maintain a 350 m horizontal distance but at a lower altitude of 150 m, a commanded vertical separation of 70 m.

The GCS interface operated flawlessly. The dual data streams provided real-time insight into both aircraft’s states. As the leader circled, the follower’s controller continuously adjusted its commands. The results, plotted post-flight, showed successful maintenance of the circular formation. The key performance metrics were derived from the recorded data. The horizontal distance control exhibited an error typically within ±30 meters, a respectable result given wind disturbances and the simplicity of the PI controller. The vertical separation control showed deviations of around ±10 meters, smaller in absolute terms but larger in proportion to the 70 m commanded separation, partly due to atmospheric effects and the inherent coupling between airspeed and altitude in fixed-wing aircraft during turns.

In a second phase, we commanded a more complex maneuver: the leader remained at 220 m altitude with a 350 m radius, while the follower was instructed to fly at 210 m altitude with a tighter 250 m radius. This tested the controller’s ability to manage conflicting spatial constraints. The system performed adequately, maintaining the prescribed 3D spatial relationship with errors consistent with the first phase. The distance between the two vehicles varied as expected based on their different orbital radii, and the controller successfully maintained the follower on its assigned tighter circle relative to the leader’s position.

The table below summarizes the statistical performance of the formation controller during the flight test’s most stable orbiting period:

| Controlled Parameter | Desired Value | Mean Achieved Value | Standard Deviation | Max Error |
|---|---|---|---|---|
| Horizontal Distance (Phase 1) | 350 m | 345 m | 18 m | 52 m |
| Altitude Separation (Phase 1) | 70 m | 68 m | 6 m | 22 m |
| Follower Orbital Radius (Phase 2) | 250 m | 255 m | 15 m | 45 m |
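Statistics of this kind can be computed from a logged series with a few lines of code. The sketch below is illustrative only: `tracking_stats` is a hypothetical helper, the sample values are made up rather than taken from our flight logs, and the population standard deviation is an assumption about the estimator.

```python
import statistics


def tracking_stats(samples, desired):
    """Summarize a logged series against its commanded value.

    Returns the mean achieved value, the standard deviation of the samples,
    and the largest absolute deviation from the desired value.
    """
    return {
        "mean": statistics.fmean(samples),
        "std": statistics.pstdev(samples),  # population std (assumed)
        "max_error": max(abs(s - desired) for s in samples),
    }


if __name__ == "__main__":
    # Made-up horizontal distances in meters, NOT flight data.
    logged = [340.0, 350.0, 360.0, 355.0, 345.0]
    print(tracking_stats(logged, desired=350.0))
```

Note that the maximum error is measured against the commanded value while the standard deviation is measured about the achieved mean, so the two columns answer different questions.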

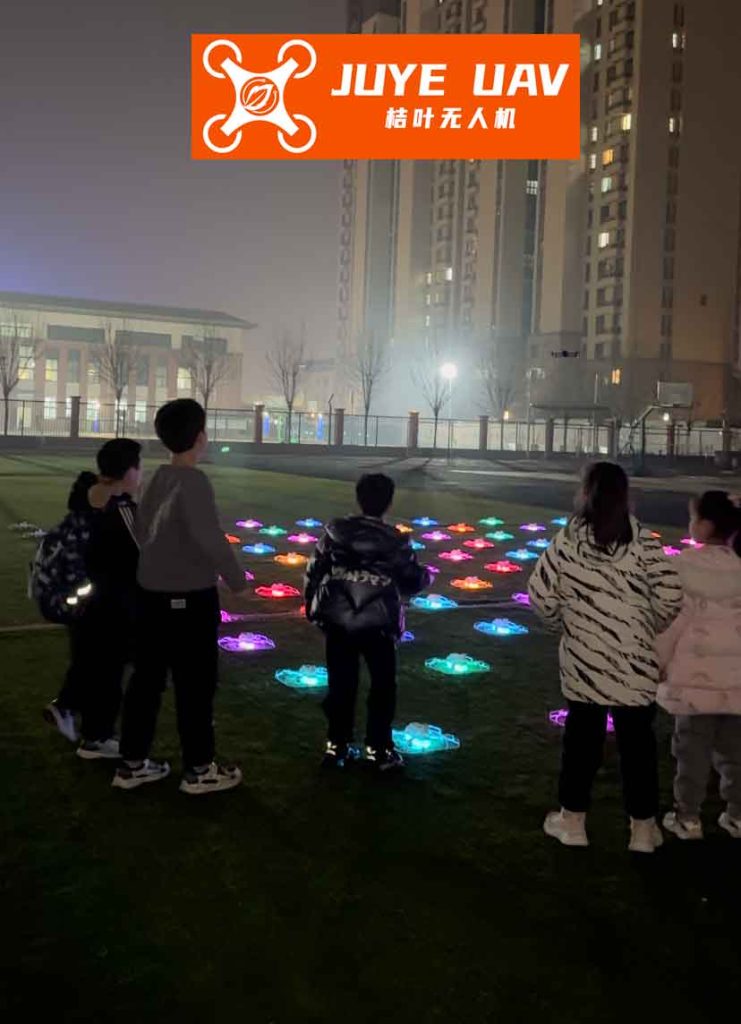

Scaling the Principles to a Formation Drone Light Show

The leap from controlling two UAVs to orchestrating a breathtaking formation drone light show involving hundreds or thousands is monumental, yet the fundamental principles we demonstrated remain directly relevant. A large-scale formation drone light show is essentially a massive, precise, real-time implementation of the leader-follower paradigm in a fully distributed or centrally-timed fashion.

First, the choreography of a formation drone light show is pre-computed as a series of time-stamped target positions for every drone in the fleet. This is analogous to defining the precise desired $(x, y, z)$ for each follower relative to a virtual leader or a global clock. Second, each drone must know its state with high precision, typically using RTK-GPS for centimeter-level positioning and high-rate IMUs for attitude, a more advanced version of the sensor suite we used. Third, reliable communication is paramount. While our GCS used direct radio links, a large-scale show employs robust mesh networks or broadcast timing signals to ensure every drone receives its command sequence without interruption, solving the synchronization problem at a much larger scale.
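To make the idea of time-stamped target positions concrete, the sketch below looks up one drone's commanded position at an arbitrary time. It is a simplified illustration: the keyframe format, the function name `target_position`, and the choice of linear interpolation are all assumptions, and real show software may well use smoother trajectory representations.

```python
from bisect import bisect_right


def target_position(keyframes, t):
    """Commanded (x, y, z) at time t from sorted (time, position) keyframes.

    Positions are linearly interpolated between keyframes and held constant
    before the first and after the last one. Keyframe times must be strictly
    increasing.
    """
    times = [kf[0] for kf in keyframes]
    if t <= times[0]:
        return keyframes[0][1]
    if t >= times[-1]:
        return keyframes[-1][1]
    i = bisect_right(times, t)  # index of the first keyframe after t
    t0, p0 = keyframes[i - 1]
    t1, p1 = keyframes[i]
    alpha = (t - t0) / (t1 - t0)
    return tuple(a + alpha * (b - a) for a, b in zip(p0, p1))


if __name__ == "__main__":
    # Hypothetical choreography for one drone: seconds and meters.
    show = [(0.0, (0.0, 0.0, 10.0)),
            (10.0, (20.0, 0.0, 10.0)),
            (20.0, (20.0, 20.0, 30.0))]
    print(target_position(show, 5.0))
    print(target_position(show, 15.0))
```

Because every drone evaluates its own keyframes against a shared clock, the fleet stays coordinated without any drone needing to observe its neighbors.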

The control logic also evolves. Instead of a continuous PI controller reacting to another drone’s position, each drone in a formation drone light show often follows a pre-defined trajectory parameterized by time $t$: $p_{cmd}(t) = (x_{cmd}(t), y_{cmd}(t), z_{cmd}(t))$. The onboard controller’s task is to track this trajectory as accurately as possible, minimizing the error $e(t) = p_{cmd}(t) - p_{actual}(t)$. This can involve more advanced control techniques like Model Predictive Control (MPC) to account for dynamics and constraints:

$$ \min_{u} \int_{t}^{t+T} ||e(\tau)||^2_Q + ||u(\tau)||^2_R \, d\tau $$

$$ \text{subject to: } \dot{x} = f(x, u), \quad u_{min} \leq u \leq u_{max} $$

Here, $u$ represents the control inputs (motor speeds, attitude angles), and the cost function penalizes trajectory error and control effort over a future horizon $T$.
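The receding-horizon idea can be illustrated with a deliberately crude sketch. It is not a production MPC: instead of solving a constrained optimization, it enumerates a grid of constant accelerations within the input bounds, simulates each over the horizon, and applies the cheapest one for a single step. The one-dimensional double-integrator model, the function names, and all numeric defaults are assumptions chosen for readability.

```python
def rollout_cost(p, v, u, ref, t0, dt, steps, q, r):
    """Cost of holding acceleration u over the horizon (double integrator)."""
    cost = 0.0
    for k in range(1, steps + 1):
        v += u * dt
        p += v * dt
        e = ref(t0 + k * dt) - p           # tracking error at step k
        cost += (q * e * e + r * u * u) * dt
    return cost


def mpc_step(p, v, ref, t0, dt=0.1, steps=20,
             u_min=-2.0, u_max=2.0, n_candidates=21, q=1.0, r=0.01):
    """Pick the constant input in [u_min, u_max] with the lowest horizon cost."""
    span = u_max - u_min
    candidates = [u_min + i * span / (n_candidates - 1)
                  for i in range(n_candidates)]
    return min(candidates,
               key=lambda u: rollout_cost(p, v, u, ref, t0, dt, steps, q, r))


if __name__ == "__main__":
    def ref(t):
        return 10.0  # hypothetical constant target position in meters

    p, v, t, dt = 0.0, 0.0, 0.0, 0.1
    for _ in range(50):
        u = mpc_step(p, v, ref, t, dt=dt)  # re-plan at every step
        v += u * dt
        p += v * dt
        t += dt
    print(round(p, 2), round(v, 2))
```

The input bounds are respected by construction because only candidates inside them are ever evaluated. A real implementation would optimize a full input sequence with a proper solver rather than a single held value.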

The challenges we observed, such as altitude coupling during turns and wind disturbances, are magnified in a formation drone light show. They are addressed through more sophisticated aerodynamic models, wind estimation and compensation algorithms, and rigorous pre-flight calibration and testing. The safety and redundancy requirements are also far more stringent, necessitating fail-safe protocols, geofencing, and emergency landing strategies for every single unit in the fleet.

Conclusion and Perspective

Our project successfully transitioned a leader-follower formation control algorithm from theoretical design to practical implementation and real-world flight testing. We developed and validated a dual-port Ground Control System that handled real-time data synchronization, state estimation, and closed-loop control for two autonomous fixed-wing UAVs. The experimental results confirmed the viability of the geometric control approach for maintaining 3D spatial relationships, achieving horizontal tracking errors within ~30 meters and vertical errors within ~10 meters under gentle wind conditions.

This work serves as a foundational case study in multi-agent UAV coordination. The core concepts—geometric relationship definition, outer-loop guidance law design, robust state synchronization, and reliable command dissemination—are not merely academic exercises. They are the essential engineering pillars supporting the awe-inspiring spectacle of a modern formation drone light show. The progression from our two-vehicle experiment to a choreographed fleet of hundreds highlights the path of technological maturation: increasing decentralization, enhancing individual agent intelligence and precision, and implementing ultra-reliable networking. The sky is no longer the limit for coordinated aerial systems, and the lessons learned from simple formations directly illuminate the path to creating complex, dynamic, and secure aerial displays and applications. The future of formation drone light show technology and, more broadly, autonomous swarms, hinges on continuing to ground advanced theory in the reality of flight test data and resilient system design.